Why Your KYC Platform Needs a Policy Engine, Not Just a Risk Score

Risk scores tell you how risky a customer is. A policy engine tells the AI how to think about risk in the first place. This article explains why configurable compliance policies are the missing layer in most KYC platforms, and how they transform AI-driven risk assessments from generic outputs into institution-specific intelligence.

Most KYC platforms give you a risk score: a number between 0 and 100, derived from screening results, jurisdictional factors, and entity complexity. That number is useful. But it does not tell you how the platform arrived at it, whether it reflects your firm's specific risk appetite, or what should happen next.

The missing layer in most compliance technology is not better data or faster screening. It is a policy engine: a configurable system that defines how AI reasons about risk, what it searches for, what rules it applies, and how it weighs different factors. Without this layer, every firm using the same platform gets the same generic assessment, regardless of their regulatory context, client base, or risk tolerance.

This article explores why policy engines matter, what they should do, and why the firms that adopt them will build fundamentally stronger compliance functions.

The Problem with One-Size-Fits-All Risk Assessment

Consider two regulated firms. One is a Nordic bank focused on retail customers. The other is a management company overseeing alternative investment funds with investors across multiple jurisdictions. Both need to assess customer risk. But the factors that matter, the weight assigned to each, and the regulatory obligations they operate under are entirely different.

A generic risk scoring model treats these firms the same. It applies the same screening logic, the same adverse media keywords, the same thresholds. The Nordic bank gets flagged for risks that are irrelevant to its customer base. The management company misses risks that its regulators specifically require it to monitor.

This is not a technology problem. It is a configuration problem. The AI performing the assessment is capable, but it has no way of knowing what your firm's compliance policy actually says. This is the challenge that led us at Fidify to rethink how compliance platforms should be built.

What Is a Policy Engine?

A policy engine is a configuration layer that sits between your compliance policies and the AI that executes them. It translates your firm's risk appetite, regulatory obligations, and operational procedures into structured instructions that the AI follows consistently.

Rather than hardcoding risk logic into software, a policy engine lets compliance administrators define:

- What to search for. Which keywords, risk indicators, and adverse media terms are relevant to your specific business and regulatory environment.

- How to reason about risk. What analytical framework the AI should apply when evaluating a customer, including which factors to prioritise and how to weigh conflicting signals.

- What rules to enforce. Conditional requirements that trigger based on specific circumstances. If a customer meets certain criteria, particular documents or approvals are required.

- How to score risk. Which risk factors matter to your firm, what weight each carries, and what severity level each represents.

- What knowledge to consult. Reference materials, regulatory interpretations, and internal guidance that inform the AI's analysis.

The result is that two firms using the same platform produce different assessments for the same customer, not because the AI is inconsistent, but because each firm's compliance policy is different, and the AI respects that difference.
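To make this concrete, here is one way the five components above might be represented as plain data rather than code. This is an illustrative sketch, not Fidify's schema. The class name, field names, firm labels, keywords, and point values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class CompliancePolicy:
    """Hypothetical container for the five components a policy engine configures."""
    name: str
    # What to search for: named keyword sets used in adverse media research.
    keyword_sets: dict[str, list[str]] = field(default_factory=dict)
    # How to reason about risk: guidance handed to the AI alongside the evidence.
    analytical_guidance: list[str] = field(default_factory=list)
    # What rules to enforce: IDs of conditional rules evaluated deterministically.
    rule_ids: list[str] = field(default_factory=list)
    # How to score risk: factor name -> maximum points that factor can contribute.
    factor_max_points: dict[str, int] = field(default_factory=dict)
    # What knowledge to consult: reference documents made available to the AI.
    references: list[str] = field(default_factory=list)


# Two firms on the same platform, configured differently (illustrative values only).
nordic_retail_bank = CompliancePolicy(
    name="Nordic retail bank",
    keyword_sets={"consumer_fraud": ["money mule", "romance scam", "account takeover"]},
    analytical_guidance=["Weigh source of funds against declared income."],
    rule_ids=["pep_requires_edd"],
    factor_max_points={"geography": 20, "occupation": 30, "transaction_profile": 50},
    references=["Internal retail KYC procedure"],
)

fund_manager = CompliancePolicy(
    name="Alternative fund manager",
    keyword_sets={"sanctions": ["sanctions evasion", "asset freeze"],
                  "investor_risk": ["tax evasion", "nominee shareholder"]},
    analytical_guidance=["Trace ownership through every intermediate vehicle."],
    rule_ids=["pep_requires_edd", "complex_structure_requires_senior_approval"],
    factor_max_points={"geography": 35, "ownership_structure": 40, "source_of_wealth": 25},
    references=["Investor onboarding manual"],
)
```

The code that consumes a `CompliancePolicy` is the same for both firms. Only the data differs, and that difference is why the same customer can receive two different assessments.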

Why Static Configuration Is Not Enough

Some platforms offer basic configuration: adjust a few thresholds, toggle certain checks on or off, upload a watchlist. This is a start, but it falls short of what a genuine policy engine provides.

The limitation of static configuration is that it only controls parameters. It does not control reasoning. You can tell the system to flag customers with a risk score above 75, but you cannot tell it what factors should contribute to that score, how to handle conflicting signals, or what additional analysis to perform when specific risk patterns emerge.

A true policy engine gives compliance teams control over the AI's analytical process itself, not just the thresholds it applies after the analysis is complete.

The Building Blocks of a Compliance Policy

An effective policy engine breaks compliance policy into discrete, manageable components that can be configured independently and combined as needed.

Search Strategy

Every risk assessment begins with research. The quality of that research depends entirely on what the AI searches for. A policy engine lets compliance teams define specific keyword sets tailored to their risk environment (sanctions-related terms, industry-specific risk indicators, jurisdictional red flags) rather than relying on a generic search.

This matters more than it might seem. A firm focused on trade finance faces different adverse media risks than one focused on wealth management. The keywords that surface meaningful results for one are noise for the other.
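As a minimal sketch of how a keyword set could drive research, the function below pairs a subject's name with each configured term. The quoted `AND` query syntax, the function name, and the keyword lists are assumptions for illustration. A real platform would adapt queries to whichever search or media provider it uses.

```python
def build_search_queries(subject: str, keyword_sets: dict[str, list[str]]) -> list[str]:
    """Pair the subject's name with every configured keyword (hypothetical query syntax)."""
    queries = []
    for set_name in sorted(keyword_sets):  # sorted so the order is stable and reviewable
        for keyword in keyword_sets[set_name]:
            queries.append(f'"{subject}" AND "{keyword}"')
    return queries


# Illustrative keyword sets for two different business models.
trade_finance_keywords = {
    "trade_risk": ["dual-use goods", "transshipment", "over-invoicing"],
}
wealth_management_keywords = {
    "private_client_risk": ["tax evasion", "undeclared offshore account"],
}

# Same subject, entirely different research depending on the firm's policy.
print(build_search_queries("Example Holdings Ltd", trade_finance_keywords))
print(build_search_queries("Example Holdings Ltd", wealth_management_keywords))
```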

Analytical Framework

How should the AI reason about the information it finds? This is where most platforms fall silent. They collect data, run it through a model, and produce a score. The analytical framework is buried in code, invisible to compliance teams, and identical for every client.

A policy engine makes this framework explicit and configurable. Compliance administrators define the analytical objectives, evidence requirements, and evaluation criteria that guide the AI's reasoning. This means the AI does not just produce a number. It produces an assessment that reflects your firm's specific approach to risk evaluation.
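One way to make the framework explicit is to store it as plain data that compliance administrators can read and edit, and then render it into instructions that accompany each assessment request. This is a minimal sketch. The class, its fields, and the rendered text format are hypothetical, and it does not show how the instructions are passed to any particular model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalFramework:
    """Hypothetical, human-readable definition of how the AI should reason."""
    objectives: tuple[str, ...]
    evidence_requirements: tuple[str, ...]
    evaluation_criteria: tuple[str, ...]

    def render_instructions(self) -> str:
        """Turn the framework into explicit instructions sent with each assessment."""
        sections = [
            ("Objectives", self.objectives),
            ("Evidence requirements", self.evidence_requirements),
            ("Evaluation criteria", self.evaluation_criteria),
        ]
        lines = []
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Illustrative content; each firm would write its own.
framework = AnalyticalFramework(
    objectives=("Determine whether the customer's source of wealth is plausible.",),
    evidence_requirements=("Cite a source for every adverse finding.",),
    evaluation_criteria=("Treat unverified allegations as lower weight than convictions.",),
)
print(framework.render_instructions())
```

Because the framework lives in data, a compliance officer can review exactly what the AI was told to do, and change it, without touching code.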

Conditional Rules

Regulatory compliance is full of conditional logic. If a customer is a politically exposed person, enhanced due diligence is required. If the transaction involves a high-risk jurisdiction, additional documentation must be collected. If the entity structure exceeds a certain complexity threshold, senior compliance approval is needed.

A policy engine captures these rules in a structured format that the AI can evaluate deterministically. This is important because conditional compliance requirements are not suggestions. They are obligations. The AI must enforce them consistently, every time, without relying on probabilistic judgment.
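The three examples above can be expressed as structured rules and evaluated without any model involvement. This is a minimal sketch, assuming rules are stored as attribute/operator/value triples. The `Rule` class, the attribute names, and the placeholder jurisdiction codes `XX` and `YY` are hypothetical. The property that matters is that the same input always yields the same triggered requirements.

```python
import operator
from dataclasses import dataclass

# Supported comparisons. "in" checks membership of the customer's value in an allowed set.
OPERATORS = {
    "eq": operator.eq,
    "gt": operator.gt,
    "in": lambda value, allowed: value in allowed,
}


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule: if `attribute` satisfies `op` against `value`, require something."""
    rule_id: str
    attribute: str
    op: str
    value: object
    requirement: str


def evaluate_rules(customer: dict, rules: list[Rule]) -> list[str]:
    """Return the requirements triggered for this customer. Pure, deterministic logic."""
    triggered = []
    for rule in rules:
        if rule.attribute in customer and OPERATORS[rule.op](customer[rule.attribute], rule.value):
            triggered.append(rule.requirement)
    return triggered


RULES = [
    Rule("pep_edd", "is_pep", "eq", True, "Enhanced due diligence"),
    Rule("high_risk_jurisdiction_docs", "jurisdiction", "in", frozenset({"XX", "YY"}),
         "Additional documentation"),
    Rule("complex_structure_approval", "ownership_layers", "gt", 3,
         "Senior compliance approval"),
]

customer = {"is_pep": True, "jurisdiction": "SE", "ownership_layers": 4}
print(evaluate_rules(customer, RULES))
```

The AI can still gather the facts (for example, whether the customer is a PEP), but the decision about what those facts require is made by rules that behave identically on every run.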

Risk Scoring Dimensions

Not all risk factors carry the same weight, and different firms prioritise different dimensions. A policy engine lets compliance teams define their own scoring framework: which factors to assess, how many points each can contribute, and what severity level each represents.

This separates two concerns that are often conflated. The AI evaluates each factor based on evidence. The scoring framework determines how those evaluations translate into an overall risk level. Changing the framework does not require changing the AI. It requires updating the policy.
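The arithmetic can be sketched as follows, assuming the AI returns a rating between 0.0 and 1.0 for each factor and the policy supplies each factor's maximum points and severity label. The class and function names, the rating scale, and the LOW/MEDIUM/HIGH bands at 40 and 75 are illustrative assumptions, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskFactor:
    """Hypothetical scoring dimension defined by the compliance team."""
    name: str
    max_points: int
    severity: str  # label carried into the report, e.g. "moderate" or "critical"


def risk_level(score: float) -> str:
    """Illustrative bands; each firm would set its own thresholds in policy."""
    if score >= 75:
        return "HIGH"
    if score >= 40:
        return "MEDIUM"
    return "LOW"


def score_assessment(factors: list[RiskFactor], ratings: dict[str, float]):
    """Combine per-factor AI ratings (assumed 0.0-1.0) with policy weights into a 0-100 score."""
    breakdown = []
    for factor in factors:
        rating = min(max(ratings.get(factor.name, 0.0), 0.0), 1.0)  # clamp to [0, 1]
        breakdown.append((factor.name, factor.severity, factor.max_points * rating))
    total_max = sum(f.max_points for f in factors)
    score = round(100 * sum(points for _, _, points in breakdown) / total_max)
    return score, risk_level(score), breakdown


# The AI's evidence-based ratings stay the same; only the policy weights change.
ratings = {"geography": 0.5, "business_activity": 0.5, "adverse_media": 0.25}

policy_a = [RiskFactor("geography", 30, "moderate"),
            RiskFactor("business_activity", 30, "moderate"),
            RiskFactor("adverse_media", 40, "high")]
policy_b = [RiskFactor("geography", 20, "low"),
            RiskFactor("business_activity", 20, "low"),
            RiskFactor("adverse_media", 60, "critical")]

print(score_assessment(policy_a, ratings)[:2])  # (40, 'MEDIUM')
print(score_assessment(policy_b, ratings)[:2])  # (35, 'LOW')
```

Under policy A the points are 15 + 15 + 10 = 40; under policy B they are 10 + 10 + 15 = 35. The AI's ratings did not change, yet the overall level did, because the scoring framework is policy, not model behaviour.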

Reference Knowledge

Every compliance team has institutional knowledge that informs their risk assessments: internal policies, regulatory guidance, jurisdictional interpretations, case studies from past reviews. A policy engine makes this knowledge available to the AI as structured reference material.

This is fundamentally different from fine-tuning a model. Fine-tuning is expensive, opaque, and requires technical expertise. Injecting reference knowledge through a policy engine is immediate, transparent, and controlled entirely by compliance teams.
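Because the knowledge is supplied at assessment time rather than trained into the model, updating it is a data change. This is a minimal sketch, assuming references are tagged by topic and selected per assessment. The class, the tags, the placeholder text, and the `[Source: ...]` labelling format are hypothetical. A production system might use semantic retrieval instead of exact tag matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReferenceDocument:
    """Hypothetical reference item maintained by the compliance team."""
    title: str
    topics: frozenset[str]
    text: str


def build_reference_context(library: list[ReferenceDocument], topics: set[str]) -> str:
    """Select references relevant to this assessment and label each with its title."""
    selected = [doc for doc in library if doc.topics & topics]
    return "\n\n".join(f"[Source: {doc.title}]\n{doc.text}" for doc in selected)


library = [
    ReferenceDocument("Internal PEP guidance", frozenset({"pep"}),
                      "Placeholder: the firm's interpretation of its PEP obligations."),
    ReferenceDocument("Trust structures note", frozenset({"ownership", "trusts"}),
                      "Placeholder: how to identify the parties to a trust."),
]

# Only material relevant to a PEP assessment is attached, each passage labelled by source.
context = build_reference_context(library, {"pep"})
print(context)
```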

From Uploaded Documents to Configured Policies

One of the most practical capabilities a policy engine can offer is the ability to convert existing compliance documents into structured policy configurations.

Most regulated firms already have detailed AML policies, KYC procedures, and risk appetite frameworks, typically as PDF documents that compliance officers reference manually. In Fidify's platform, the policy engine can extract the relevant content from these documents and convert it into structured rules, keyword sets, analytical guidance, and risk factors.

This bridges the gap between what your firm's compliance policy says and what your technology does. Instead of compliance officers translating policy documents into software configurations manually (a process that is slow, error-prone, and difficult to keep current), the policy engine handles the translation automatically.

The extracted content remains traceable back to the source document, section, and page number. This means every rule the AI enforces can be linked to the specific policy provision that requires it, a capability that is invaluable during regulatory examinations.
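At the data level, traceability can be as simple as every extracted rule carrying a pointer to its origin. This sketch assumes that shape. The class names, document name, section, and page number are made-up values, and the extraction step itself is not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceReference:
    """Where in the uploaded policy document a rule came from."""
    document: str
    section: str
    page: int


@dataclass(frozen=True)
class ExtractedRule:
    """Hypothetical rule produced by document extraction, carrying its provenance."""
    rule_id: str
    requirement: str
    source: SourceReference

    def citation(self) -> str:
        """A one-line explanation an examiner can check against the original document."""
        s = self.source
        return (f"{self.rule_id}: {self.requirement} "
                f"(source: {s.document}, section {s.section}, p. {s.page})")


rule = ExtractedRule(
    rule_id="pep_edd",
    requirement="Apply enhanced due diligence to politically exposed persons",
    source=SourceReference(document="AML Policy.pdf", section="4.2", page=12),
)
print(rule.citation())
```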

Curious how this works in practice? See how Fidify turns compliance documents into structured policy rules.

Why Versioning Matters

Compliance policies change. Regulations update, risk appetites shift, and new requirements emerge. A policy engine must handle this reality gracefully.

The critical requirement is immutable versioning. When a policy is updated, the previous version must be preserved, not overwritten. Every risk assessment produced under the old policy must remain permanently tied to the exact policy version that was in effect when it was conducted.

This is not a technical nicety. It is a regulatory necessity. When a regulator reviews a historical assessment and asks what policy governed the decision, the answer must be precise and verifiable. The policy that applies today is irrelevant to an assessment conducted last year.

Immutable versioning also supports internal audit. Compliance teams can reconstruct the exact policy state at any point in time, understanding not just what decision was made, but what rules were in place when it was made.
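A minimal sketch of append-only policy versioning, assuming each assessment stores the version number it ran under. `PolicyStore`, `AssessmentRecord`, and the `high_risk_threshold` setting are hypothetical. A production system would persist versions in durable, write-once storage and would likely also record effective dates and approvers.

```python
import copy
from collections.abc import Mapping
from dataclasses import dataclass
from types import MappingProxyType


@dataclass(frozen=True)
class PolicyVersion:
    version: int
    content: Mapping[str, object]  # read-only view (top level) of the policy settings


class PolicyStore:
    """Hypothetical append-only store: updates add versions, never overwrite them."""

    def __init__(self) -> None:
        self._versions: list[PolicyVersion] = []

    def publish(self, content: dict) -> PolicyVersion:
        # Deep-copy so later edits to the caller's dict cannot alter a published version.
        snapshot = MappingProxyType(copy.deepcopy(content))
        version = PolicyVersion(version=len(self._versions) + 1, content=snapshot)
        self._versions.append(version)
        return version

    def get(self, version: int) -> PolicyVersion:
        if not 1 <= version <= len(self._versions):
            raise KeyError(f"unknown policy version {version}")
        return self._versions[version - 1]

    @property
    def current(self) -> PolicyVersion:
        return self._versions[-1]


@dataclass(frozen=True)
class AssessmentRecord:
    customer_id: str
    policy_version: int  # pinned at assessment time, never updated afterwards
    risk_level: str


store = PolicyStore()
v1 = store.publish({"high_risk_threshold": 75})
record = AssessmentRecord("C-001", v1.version, "MEDIUM")
store.publish({"high_risk_threshold": 70})  # a later policy change creates version 2

# The historical assessment still resolves to the policy that governed it.
print(store.get(record.policy_version).content["high_risk_threshold"])  # 75
print(store.current.content["high_risk_threshold"])  # 70
```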

Separating Scores from Regulatory Triggers

One of the most important design decisions in a policy engine is how to handle the relationship between risk scores and regulatory triggers.

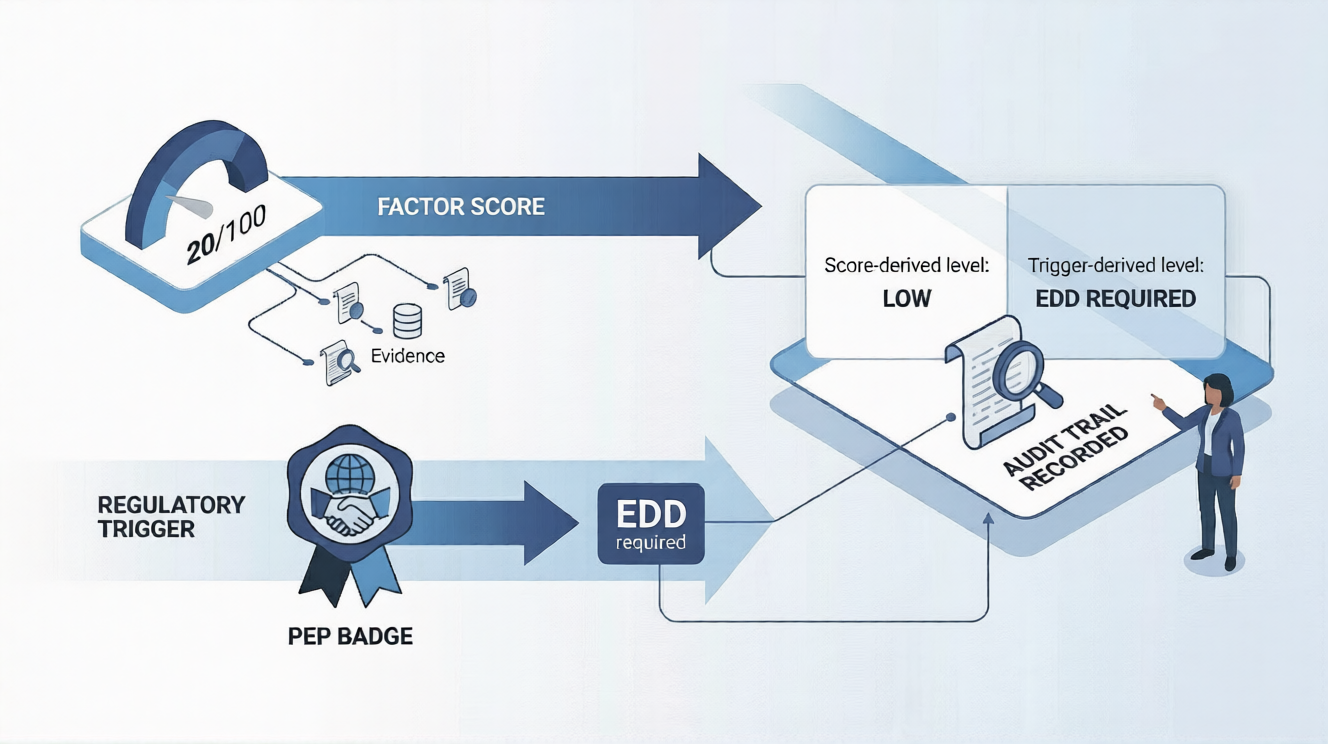

Consider a customer whose risk factor scores are uniformly low. Geographic risk is minimal, business activity is straightforward, and there is no adverse media. The AI produces a risk score of 20 out of 100. By any factor-based analysis, this is a low-risk customer.

But the customer is a politically exposed person. Under AMLR Articles 40 through 44, PEP status is a mandatory enhanced due diligence trigger. The final risk level must be HIGH, regardless of what the factor scores say.

A naive implementation would simply override the score, changing 20 to 75, or whatever threshold maps to HIGH. This produces a report where the factor analysis says one thing and the final score says another. It is internally inconsistent and difficult to explain to a regulator.

A well-designed policy engine separates these concerns. The factor-based score remains untouched: the AI's analytical work is preserved exactly as computed. Regulatory triggers are evaluated separately and deterministically. The final risk level records both the score-derived level and the trigger-derived level, along with which source determined the final rating.

This transparency is not optional. Regulators expect firms to explain their risk ratings. A compliance officer must be able to point to the specific trigger that elevated the rating and explain why it overrode the factor-based assessment. Without clear separation, this explanation is impossible.
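Using the PEP example above, the separation can be sketched like this: the factor score is stored exactly as computed, triggers are evaluated on their own, and the record states which source set the final level. The band thresholds, field names, and the assumption that a trigger can only raise (never lower) the level are illustrative, not a description of any specific product.

```python
from dataclasses import dataclass
from typing import Optional

LEVEL_ORDER = {"LOW": 0, "MEDIUM": 1, "HIGH": 2}


def level_from_score(score: int) -> str:
    """Illustrative score bands; real thresholds come from the firm's policy."""
    if score >= 75:
        return "HIGH"
    if score >= 40:
        return "MEDIUM"
    return "LOW"


@dataclass(frozen=True)
class FinalRiskRating:
    factor_score: int              # untouched output of the factor analysis
    score_level: str               # level implied by the score alone
    trigger_level: Optional[str]   # level imposed by regulatory triggers, if any fired
    triggers: tuple[str, ...]      # which triggers fired
    final_level: str
    determined_by: str             # "score" or "trigger"


def finalise(factor_score: int, fired_triggers: dict[str, str]) -> FinalRiskRating:
    """Combine score and triggers without modifying the score.

    `fired_triggers` maps a hypothetical trigger name to the minimum level it imposes.
    """
    score_level = level_from_score(factor_score)
    trigger_level = max(fired_triggers.values(),
                        key=lambda level: LEVEL_ORDER[level], default=None)
    if trigger_level is not None and LEVEL_ORDER[trigger_level] > LEVEL_ORDER[score_level]:
        final_level, determined_by = trigger_level, "trigger"
    else:
        final_level, determined_by = score_level, "score"
    return FinalRiskRating(factor_score, score_level, trigger_level,
                           tuple(sorted(fired_triggers)), final_level, determined_by)


rating = finalise(20, {"pep_status": "HIGH"})
print(rating)
```

The report can now show the factor score of 20 (LOW) alongside the PEP trigger (HIGH) and state that the trigger determined the final rating. In this sketch a tie is attributed to the score, so a trigger is named as decisive only when it actually raised the level; a firm could reasonably choose to record both instead.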

Reusability Across Assessment Types

A mature compliance function does not assess risk in a single dimension. Individual customers, corporate entities, and counterparties all require risk assessment, but the criteria, factors, and regulatory requirements differ for each.

A policy engine should support this naturally. The same keyword set for sanctions screening can be attached to both individual and entity assessment policies. The same compliance rules can apply across assessment types where the regulatory requirement is universal. But the analytical framework and risk factor weights can differ to reflect the distinct nature of each assessment.

This reusability reduces duplication and ensures consistency. When a keyword set is updated, it takes effect across every policy that references it, eliminating the drift that occurs when the same information is maintained in multiple places.
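Reuse works because policies hold references to shared components rather than copies of them. This is a minimal sketch of that idea. `KeywordRegistry`, `AssessmentPolicy`, and the keyword set IDs are hypothetical.

```python
from dataclasses import dataclass, field


class KeywordRegistry:
    """Hypothetical shared store: each keyword set is defined once, under one ID."""

    def __init__(self) -> None:
        self._sets: dict[str, tuple[str, ...]] = {}

    def put(self, set_id: str, keywords: list[str]) -> None:
        self._sets[set_id] = tuple(keywords)

    def get(self, set_id: str) -> tuple[str, ...]:
        return self._sets[set_id]


@dataclass
class AssessmentPolicy:
    name: str
    keyword_set_ids: list[str] = field(default_factory=list)  # references, not copies

    def keywords(self, registry: KeywordRegistry) -> list[str]:
        # Resolved when used, so an update to a shared set applies to every policy.
        return [kw for set_id in self.keyword_set_ids for kw in registry.get(set_id)]


registry = KeywordRegistry()
registry.put("sanctions", ["sanctions evasion", "asset freeze"])
registry.put("shell_companies", ["nominee director", "shell company"])

individual_policy = AssessmentPolicy("Individual", ["sanctions"])
entity_policy = AssessmentPolicy("Entity", ["sanctions", "shell_companies"])

# One update to the shared set is picked up by both policies.
registry.put("sanctions", ["sanctions evasion", "asset freeze", "export control violation"])
print(individual_policy.keywords(registry))
print(entity_policy.keywords(registry))
```

In a system that also versions policies immutably, a published policy version would likely pin the specific keyword-set version it used, so that historical assessments stay reproducible; that detail is omitted here.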

What This Means for Compliance Teams

The shift from static risk scoring to policy-driven assessment has practical implications for how compliance teams operate.

Compliance officers become policy architects. Instead of being consumers of generic risk outputs, compliance teams define the rules, factors, and analytical frameworks that govern how AI assesses their customers. This is a more strategic role that aligns with how regulators expect compliance functions to operate.

Regulatory change becomes a configuration change. When new regulations take effect or existing ones are updated, the response is a policy update, not a software development project. Compliance teams can adapt their assessment logic without waiting for vendor release cycles.

Audit preparation becomes straightforward. With versioned policies, traceable rules, and transparent scoring, the materials needed for a regulatory examination are built into the system rather than assembled after the fact.

Institutional knowledge is preserved. When an experienced compliance officer leaves, their understanding of the firm's risk approach does not leave with them. It is captured in the policy configuration, available for their successor to review, understand, and build upon.

The Road Ahead

Policy engines for compliance are still a relatively new concept. Most firms are at the early stages, using AI for screening triage or basic risk scoring, with limited ability to configure how the AI reasons about risk.

But the direction is clear. Regulators are demanding risk-based approaches that reflect each firm's specific circumstances. Generic, one-size-fits-all compliance technology cannot meet this expectation. The firms that invest in configurable policy infrastructure will be better positioned to satisfy regulators, serve customers, and scale their compliance operations without proportional headcount growth.

The risk score is not going away. But it is becoming one output among many, and the policy engine that produces it is becoming the real competitive advantage. At Fidify, we are building that engine so compliance teams can focus on what they do best: exercising expert judgment, not wrestling with inflexible software.

Want to explore how policy-driven compliance works for your firm? Talk to our team about a policy engine walkthrough.